melonDS RSS

https://melonds.kuribo64.net/rss.php

The latest news on melonDS.


Expect slowdown -- by Arisotura

A bit of a status update since the last post...

"I've been taking a bit of a break from melonDS lately... mental health reasons, a silly side project, the usual stuff."

More of the same stuff, but add to it that I've been getting started in this new job.

While it's been nice to have time to myself in order to deal with mental health matters, unemployment gets old after a while, so I was eager to finally start this job.

If you remember, I've had a foretaste of it back in December, too. I've seen my ADHD self thrive in that setting. The job is largely about repairing A/V equipment. This feels like a much better fit for my brain than a regular developer job. I like to code, but that's not all I do: I also like to build, repair things, and in general, understand how systems work (or design them). I consider code to be much of the same, but it's all inside a computer instead of being a physical object, so it lacks the "doing things with my hands" aspect.

A related example here: last year, I repaired buttons on a vacuum tube radio as part of a larger project. This is the kind of small project where I have a blast: using my brain to figure out a solution, actually implementing it with my hands, doing my best to adapt to how things actually work out... It makes me feel alive like nothing else.

But I digress. The flip side of this is that the longer work day and commute time leave me with less time at home. On the other hand, this job is a particular arrangement where I'll be working half of the year, which will definitely give me time for my personal projects, including melonDS. Just, won't be now. The first work period is going to be 6 months.

-

This also means having to balance work and mental health.

I feel better equipped to handle this, and I can envision the possibility of long term happiness. But there's still a long way to go. I also find this interesting. Probably because there's the same "figuring out how a system works" aspect, but with my own psyche. Instead of being cold logic, it's very emotion-driven.

I find the whole topic very interesting too! I would write about it (not here), I like to write about things and I feel that it could inspire other people, but I don't want to get too personal either.

-

This most likely means there won't be much progress on melonDS until November, at least from me.

Part of me wants to figure out something for ARM9 timings. But realistically, I should focus on finishing what needs to be finished for melonDS 1.2. Polishing the OpenGL renderer. Maybe furthering the codebase cleanup. Those things.

I'll do my best.

A bit of a status update -- by Arisotura

I've been taking a bit of a break from melonDS lately... mental health reasons, a silly side project, the usual stuff.

Regardless, I've been rethinking my plans for melonDS.

I think I might postpone the timing work to melonDS 1.3. It's bigger than anticipated. I want to address some other issues, most notably finishing the new OpenGL renderer, and release melonDS 1.2. There's also some codebase cleanup I want to do.

Regarding the timing work, it's going to take some planning, and possibly a larger rework.

As I've said, the ARM7 is simple enough. Things are pretty much sequential, and the only extra complexity comes from main RAM burst termination delays.

The ARM9 is where it gets hard. We deal with mechanics like cache streaming, write buffering, ability to keep running code while the bus is in use... All sorts of mechanics which highlight the limits in melonDS's current model.

For example, take the LDM and STM instructions. They do multiple consecutive memory accesses. They're commonly used to push registers to the stack and pop them back, or to quickly clear or copy large blocks of memory.

There are several issues arising from this. Depending on the base address, a LDM/STM instruction might cross the boundary between two memory regions, or two MPU regions on the ARM9. STM, when accessing I/O registers, might also cause a bus stall between two memory writes (for example, if it starts a DMA transfer...).

melonDS was built on top of a plain old interpreter. We read one instruction word from memory, we use a lookup table to figure out what we should do, we do it, then we move on to the next instruction, and so on. This model treats individual instructions as atomic: there is no way to account for all possibilities with LDM and STM, for example. Or at least, not easily.

So an idea I have in mind is to try a different model for CPU emulation. A sort of cached interpreter.

The idea is to turn DS code into an intermediate representation which not only enables faster execution, but also gives us more freedom. For example, the aforementioned LDM/STM could be turned into discrete memory accesses, or optimized into larger accesses if possible.
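To make the idea a bit more concrete, here is a minimal Python sketch of what "turning LDM into discrete memory accesses" could look like. Everything in it (`MicroOp`, `expand_ldmia`, `BlockCache`) is a hypothetical name made up for illustration and is not melonDS code; it only covers the increment-after form of LDM, and it caches one instruction at a time rather than a whole block.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicroOp:
    """One discrete memory access (hypothetical intermediate representation)."""
    kind: str    # "load" is the only kind used in this sketch
    reg: int     # destination register number, 0..15
    offset: int  # byte offset from the base address


def expand_ldmia(reg_list):
    """Split an LDMIA register bitmask into one load micro-op per register.

    The lowest-numbered register is loaded from the lowest address.
    """
    ops = []
    offset = 0
    for reg in range(16):
        if reg_list & (1 << reg):
            ops.append(MicroOp("load", reg, offset))
            offset += 4
    return ops


class BlockCache:
    """Caches the expansion per code address, so decoding happens only once."""

    def __init__(self):
        self._blocks = {}
        self.decodes = 0  # how many times the decode step actually ran

    def lookup(self, addr, reg_list):
        if addr not in self._blocks:
            self.decodes += 1
            self._blocks[addr] = expand_ldmia(reg_list)
        return self._blocks[addr]
```

Once an instruction is split like this, each micro-op can be checked against region boundaries or bus stalls on its own, which the atomic-instruction model can't do easily.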

Another reason would be timing calculations. Some of them, like interlocks, can be annoying to resolve, but only really need to be resolved once, so a cached interpreter could have them precalculated.

At this stage, this is only a basic idea, but it's something I want to experiment with.

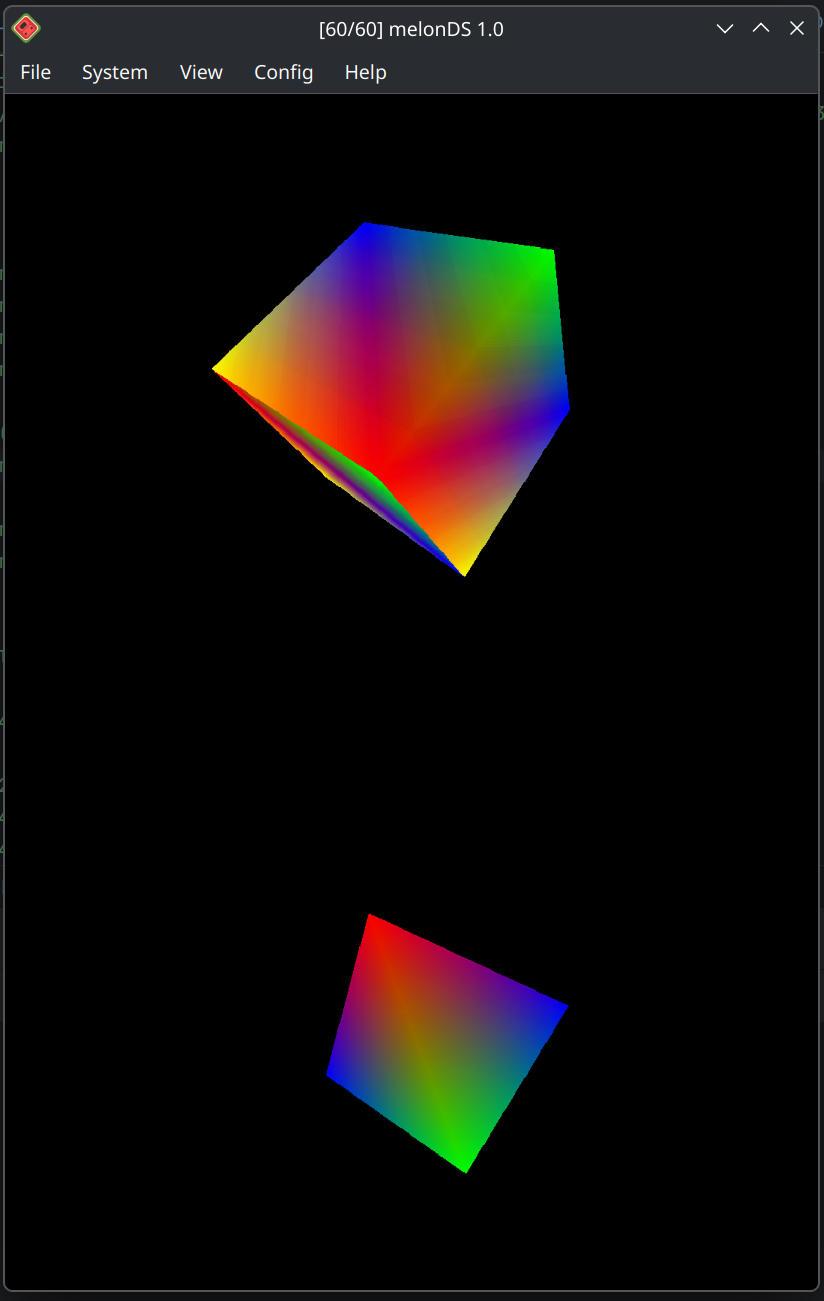

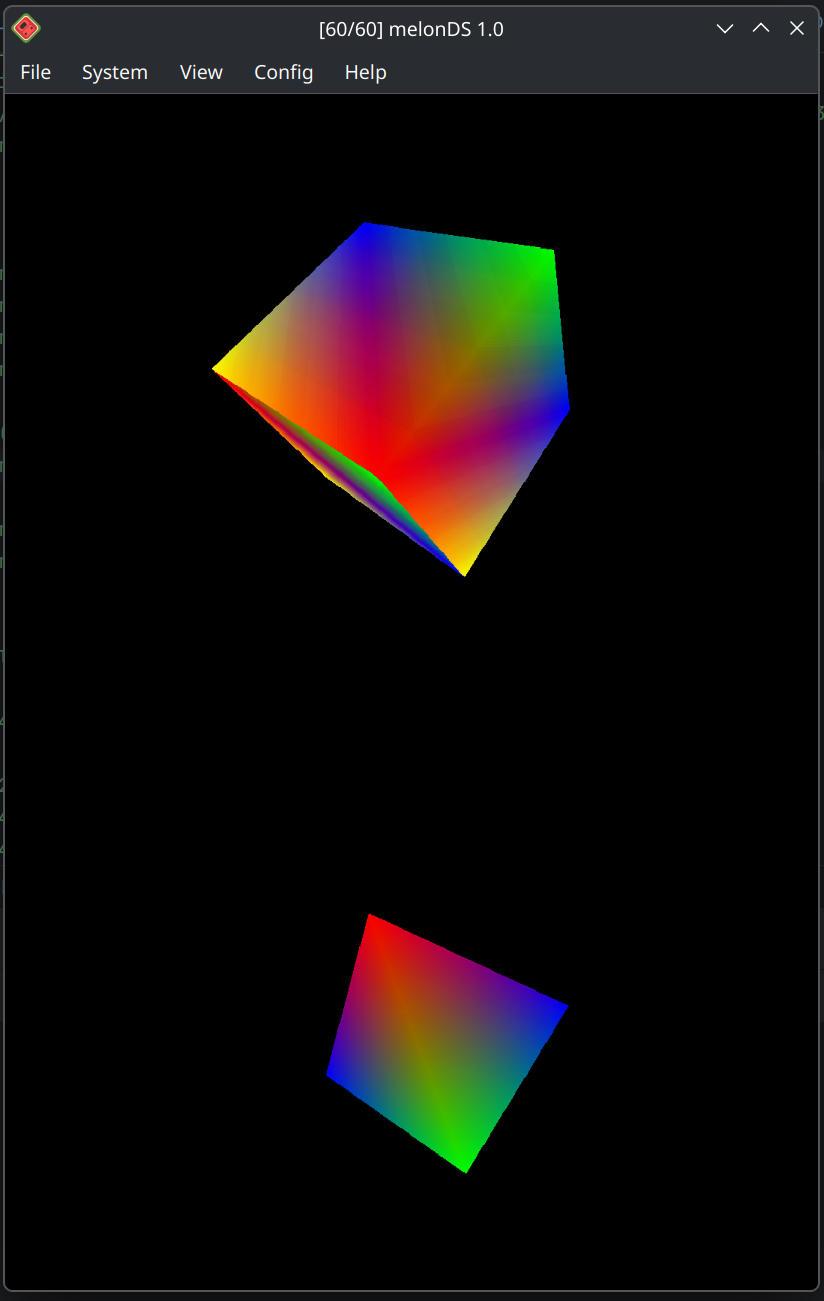

New demo: Emulator Examination -- by Arisotura

Emulator Examination is a DS demo which was released recently, programmed by PoroCYon.

I figured this would be an opportunity to write about something other than CPU timings for once! But first, a video of this demo, comparing the hardware results against melonDS.

This demo bends the DS hardware in creative ways. You can read PoroCYon's writeup here, but I figured I would write about my perspective too.

First of all, this is a DSi demo, which limits the opportunities for emulator comparison. As of now, melonDS and NO$GBA are the only emulators with some degree of DSi support. The demo will still run on a DS, but you'll be limited to the first test, since this one isn't DSi-exclusive.

Now, let's see what we got here.

First, we have an eyesight test. At first, I had no real idea what was going on here. Basically, the PPU mode is changed in the middle of a scanline, which causes very specific glitches.

The interesting part is that this mirrors how hardware reverse-engineering works: doing unintended things with the hardware, observing the results and figuring out the logic behind them, can offer insight into how the hardware works beneath the surface. It can also confuse you to shit (hi, 3D GPU).

However, this effect is unlikely to ever work correctly in melonDS. Emulating it requires understanding and accurately modelling how the PPU works at a cycle level, while melonDS employs a scanline-based renderer. And since no games out there try to modify PPU registers during a scanline, there are no provisions for supporting that.

This would be right up Jakly's alley, with DualSOUP. fleroviux has done a lot of research on the GBA PPU's cycle-level operation, which will likely be helpful for this.

The second test displays an animated rainbow pattern on a monitor. What's causing melonDS to struggle so much with it?

My immediate observations were that: 1) it uses timer-driven NDMA transfers, and 2) it uses custom DSP code, hence the poor performance.

The first part wouldn't be very difficult to implement. I have never implemented this NDMA transfer mode because I haven't yet encountered a use case for it, and haven't yet gotten around to writing test ROMs, but it's probably not very difficult - just not high priority.

The second part is where this stops. This is more racing-the-beam shenanigans: the DSP sets up a DMA transfer to the first entry of the BG palette, and that's how the rainbow effect is created. So even with proper support for timer-driven NDMA, it still wouldn't render properly on melonDS.

The third test simulates a basic hearing test: it produces beeps that match the little 3D animation. melonDS outputs nothing at all.

This one is cheeky. I had a feeling I knew what was going on there, and I was right.

I talked about the DSi audio hardware here. The DSi uses a TI audio amplifier slash touchscreen controller. This chip has quite a bunch of audio processing features, one of them being... making beeps. There's a bunch of registers for this: you set the desired length in samples, some values derived from the desired frequency, and it will output a sine wave.
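As a rough illustration of a beep generator driven by a sample count and frequency-derived values, here is a small Python sketch. It is an assumption-heavy model, not the TSC's actual register layout: it takes the "values derived from the desired frequency" to be the sine and cosine of the per-sample phase step, and the function name `beep` and its parameters are made up for this example.

```python
import math


def beep(length, freq_hz, sample_rate_hz, amplitude=1.0):
    """Generate `length` samples of a sine wave with a rotation oscillator.

    Assumption: the two frequency-derived values are sin(step) and cos(step),
    where step is the phase advance per sample.
    """
    step = 2.0 * math.pi * freq_hz / sample_rate_hz
    s, c = math.sin(step), math.cos(step)
    x, y = 0.0, amplitude  # x tracks the sine output, y the matching cosine
    out = []
    for _ in range(length):
        out.append(x)
        # Rotate the (x, y) pair by one phase step.
        x, y = x * c + y * s, y * c - x * s
    return out
```

The appeal of this shape is that the generator needs no sine table at run time: two constants and a sample counter are enough.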

When I looked at the console output in melonDS, I could see repeated accesses to unknown TSC registers, and sure enough, those are the beep registers.

It brings back memories: the Wii U gamepad uses a similar audio amplifier chip, and it also has those beep registers. When I started reverse-engineering the audio system there, I used those to validate that the audio amplifier was configured correctly.

Anyway, this is... interesting. On one hand, it would be interesting to try to emulate this. On the other hand, it wouldn't be terribly useful - nothing else ever uses the beep registers. But it's within the realm of what I consider feasible in melonDS.

In a similar vein, the current TSC support in melonDS is pretty lackluster, but once again, nothing ever changes the TSC configuration outside of specific situations (like entering DS mode). The TSC is configured once and everything else, like volume control, is done by other components. Even the audio frequency switch is implemented by changing the TSC input clock - none of the TSC configuration is affected.

The fourth and final test is a series of CPU tests. But wait, how could melonDS fail those so egregiously?

The DSi embeds a new wifi card based on the Atheros 6k chipset. Unlike the old DS wifi card, which is powered by a fixed-function chip, this Atheros wifi card is powered by an Xtensa CPU. Modern wifi cards work similarly: you have an embedded CPU functioning as the brain of the system, controlling the 802.11 radio, the power management systems, and the various other peripherals. This CPU runs firmware which exposes a high-level interface to the host driver, and handles all the complex low-level details.

In this case, our demo is running custom code on the wifi card's Xtensa CPU.

Hence why it's utterly and completely failing. It's interesting that they were able to even get past this test in the video: when I tested the demo on melonDS 1.1, it would get stuck on the last subtest.

It's a bit of a shitty situation, here.

On one hand, it would be an interesting technical challenge to get this running.

On the other hand, I'm not looking forward to emulating the entire wifi card. And even if we ignore that, the DSP situation should tell you why the wifi card firmware is HLE'd.

Then we get to the credits screen, which uses more beam-racing to do a shimmer effect. Because of course.

All in all, I appreciate the display of creativity in how the hardware is used. It's always interesting to figure out how those things work.

At the same time, this shows the limits to emulation. It's a balancing act between what is fun and what is practical to do with a given approach.

For example, see the fourth test up there, the whole "running custom code on the wifi card" bit. If games were doing that on a regular basis, and with enough variety, it would make sense to emulate that entire CPU.

Compare to the DSP situation. We have low-level DSP emulation, but it's slow. Even if there could be room for optimization, there's only so much we can do when emulating an entire subsystem. On the flip side, all the games that use the DSP use a limited set of ucodes, which makes HLE feasible. The issue is that it fails to cover custom ucodes, like our demo here is using. For this, it would make sense to look into some sort of recompilation, JIT or AOT (ahead of time). While commercial games were constrained to a limited set of official ucodes, homebrew has no such limitation. HLE wouldn't be practical unless specific ucodes became common (for example, by becoming part of homebrew toolkits).

As far as the wifi card is concerned, it's a no-brainer: every game is going to use the same wifi firmware, which was already set up by the DSi firmware, so it just makes sense to HLE it.

So, basically, while there's room for improvement in melonDS, I doubt it will ever run this demo 100% correctly.

The timings saga, ep 2 -- by Arisotura

At this point, this might as well be its own little saga... albeit not as exciting as the local multiplayer one, at least on the surface. What is exciting, though, is the possibility to deal with timing issues once and for all.

Maybe not. We can't get it 100% perfect without cycle accuracy. But we can get as close as possible, and then see how much leeway we have. It should be easier to figure this out with a more correct model. When your timing model is fundamentally inaccurate, you can tweak the numbers, but that's a game of whack-a-mole: it fixes some bugs and causes other bugs. This is what the "Enable Advanced Bus-level Timings" option in DeSmuME does. The cache timing constants in melonDS, if they could be modified by the end user, would yield similar results.

Fun fact, melonDS's current timing model is flawed. Due to a few bugs, it doesn't even work as intended. But even if it did, it would still be inaccurate.

DesperateProgrammer's cache PR, aka PR #1955, is a pretty solid base for cache emulation. I think it's a good sign that it alone has shown promising results, despite being based on the same fundamentally flawed timing model.

Anyway, enough talking. Let's see where we're at today.

So far, I'm mostly done with ARM7 timings. I've been collecting timing numbers, figuring out the logic behind those with Jakly's help (thanks there!), and implementing it. In my timing tests, melonDS yields the same numbers as hardware, so that's nice. It feels pretty satisfying when you figure out the logic and everything clicks into place.

I still need to doublecheck everything, and do actual real-world timing tests, to make sure I have everything right.

However, as far as CPU timings are concerned, the ARM7 is only the appetizer.

The timing model is fairly simple. The ARM7 doesn't have internal memory and only has one bus, and everything runs at the same frequency, so most timings are fairly straightforward.

Things get more complex when main RAM is involved.

To understand how this works, it's helpful to consider how the RAM itself works. There are at least two possible RAM chips for the DS, as well as different, bigger RAM chips found in devkits and such. Since those are likely to have different limitations, the DS's memory controller needs to adhere to the lowest common denominator in order to ensure consistent operation.

GBAtek states that the access time for main RAM is 8 cycles, but the reality is more complicated. There is a setup time and a termination time. The former means that when accessing the RAM, there will be some latency before it begins to return data (or to accept data, when writing). At this point, it's possible to access multiple consecutive addresses in a burst. When ending a burst, there is a minimum delay before you can access the RAM again: that's the termination time.

The interesting part is that after a main RAM access, the ARM7 is free to do other things during the burst termination time, as long as it doesn't try to access main RAM again - in that case, it would have to wait.
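The setup/burst/termination behaviour described above can be sketched as a tiny Python model. The class name `MainRamModel` and the cycle counts used with it are placeholders chosen for illustration, not measured hardware values; the sketch also ignores the burst length limit and contention between the two CPUs.

```python
class MainRamModel:
    """Toy model of main RAM: setup time, per-word time, termination time."""

    def __init__(self, setup, per_word, termination):
        self.setup = setup              # latency before data starts flowing
        self.per_word = per_word        # cost of each word within a burst
        self.termination = termination  # minimum gap after a burst ends
        self.busy_until = 0             # cycle at which a new burst may start

    def access(self, now, words):
        """Run a burst of `words` words requested at cycle `now`.

        Returns the cycle at which the CPU gets its data and can move on.
        The termination time is not charged to the CPU directly: it only
        delays the next main RAM access, if one comes too soon.
        """
        start = max(now, self.busy_until)
        done = start + self.setup + words * self.per_word
        self.busy_until = done + self.termination
        return done
```

With placeholder timings of 4/2/3 cycles, a back-to-back access waits out the termination time, while an access that arrives later does not pay for it at all.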

There are also other limitations and edge cases. For example, there is a limit on how long a burst can last. In practice, though, this particular limitation only matters for DMA. On the ARM7, consecutive data fetches (ie. LDM) are limited to 16 words, which isn't enough to hit the burst limit. Code fetches can hit it under very specific circumstances which are highly unlikely to occur in real world situations. And it doesn't apply to the ARM9 at all.

That's about it, though. The other memory regions are pretty straightforward timings-wise.

There is only one thing that's really missing from ARM7-side timings, besides the holy grail of main RAM contention, and that is audio DMA. The audio hardware accesses memory periodically, either to read sample data or to write captured data. Those memory accesses will incur stalls on the ARM7, and probably on DMA too.

Audio memory accesses are done in bursts of 4 words, through the FIFO system. melonDS emulates this part already, but ignores the timing aspect of it. Emulating this bit correctly would require deeper research into how the audio hardware operates and when it accesses memory.

Of course, I don't know how truly important it is, but it's worth keeping in mind. There are also several timing-related issues on the ARM7 side in DSi mode: the faster SPI clock isn't supported yet, timings for SD transfers are a big fat guess, I2C transfers and AES operations are instant.

So now, let's get to the main course: the ARM9.

The ARM9 is much more important, since that's where all the game logic runs. It's also more complex.

I've been banging my head against the numbers I've collected from my timing tests, trying to figure out the logic behind them. Jakly has been very helpful here, too.

At least, we've figured out the logic behind memory read instructions. It appears that the ARM9 can try to initiate the next code fetch early, but this doesn't always work as intended - sometimes resulting in weird extra latency, because every bus access must go through buffering.

The ARM9 also introduces us to interlocks.

For example, if you run a LDR instruction (32-bit memory read) on the ARM7, the CPU will retrieve the data from memory, then spend one more cycle storing that data into the desired register.

The ARM9 works the same way, except it will attempt to save time by starting the next instruction before LDR is actually done storing its result. But what if the next instruction tries to use the same register that LDR is supposed to write to? That's an interlock - this next instruction will need to wait for LDR to actually finish.

This is something that will need a great deal of testing. Supposedly, the ARM9 has "fast paths" meant to avoid interlocks in certain situations, so we need to figure out where this applies.

Also, fun observation: sometimes the ARM9 is just as hacky as the rest of the console!

Take LDRD, for example. This is a new instruction that has been added to the ARM9. It functions as a 64-bit memory read: it reads two words from memory to a pair of registers.

I have observed LDRD's timing characteristics long and hard, and it is evident to me that LDRD is basically LDR with a second memory read duct-taped at the end. This also means that the second memory read is nonsequential, which makes it inefficient.

By comparison, its counterpart STRD (64-bit memory write) seems more sane.

In general, all the memory write instructions seem sane - they don't have to worry about interlocks. I think the rest isn't too difficult - the instructions that don't touch memory are pretty straightforward. I haven't yet looked into the CP15 (system coprocessor) operations, though.

The issue with the ARM9 is going to be everything pertaining to memory. The caches. The separate busses. All that. For example, the ARM9 can continue running during a DMA transfer as long as it doesn't try to use the external bus. melonDS's very crude implementation of bus stalls doesn't support that.

This also brings me to the JIT.

At this point, it's a balancing act. There is a feeling that the JIT hinders further accuracy improvements. Understandable... the JIT is fundamentally inaccurate. To get a speed benefit, the JIT needs to operate on large enough code blocks, which doesn't mesh well with the way melonDS's scheduler works.

On the other hand, it seems pretty evident to me that removing the JIT would be massively unpopular. I'm against it.

I need to get more familiar with the JIT's inner workings. At this point, I know the basic idea of how a JIT works, but I've never actually written one, which is a bit ironic for someone in my position.

What interests me is to see how far we can go with timings in the JIT. I imagine that a lot of the overall CPU timing model could be handled at compile time. On the other hand, I don't think emulating the ARM9 caches in the JIT would work terribly well, performance wise. This makes me wonder how much performance there would be to gain from a cached interpreter approach.

Food for thought.

Actual status update on timings -- by Arisotura

As promised.

Timings work is underway. I'm mostly done collecting numbers, minus stuff like CP15 operations and any special cases that might crop up. I started fixing up ARM7 timings.

Thing is, timings work isn't always easy. Collecting timing numbers is one thing, understanding the logic behind them is another thing entirely. Geometry engine timings, which I worked on years ago, are a prime example of this: when you submit a display list to the geometry engine, the total execution time isn't simply the sum of each command's execution time. The reason for this is that the geometry engine has a pipeline: it can execute certain commands in parallel. It took me a lot of testing and number collecting to understand the logic behind it.

If the geometry engine, a simple 3D command processor, is already like that, you can imagine what a full-fledged CPU is going to be like. This is why CPU timings are an area of melonDS that has been more or less neglected for so long.

On the flip side, the DS isn't like older consoles such as the GameBoy or the NES, where games might rely on very precise timings in order to get the best out of the system's limited power, and they might crash if a certain event occurs 2 cycles too late. On the DS, from what I've seen, it's more about timings at the macro level, ie. how long a bigger operation takes. For example, consider a DMA transfer to copy a screen-sized bitmap, that is, 24576 words. A difference of 1 cycle in the initial DMA setup time doesn't matter, but a difference of 1 cycle per word transferred quickly adds up. Like here.
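A quick back-of-the-envelope calculation shows how that adds up. The Python snippet below assumes a 256x192 bitmap at 16 bits per pixel (which gives the 24576 words mentioned above) and the commonly cited 33.513982 MHz bus clock; both are assumptions added for this example.

```python
SCREEN_WIDTH = 256
SCREEN_HEIGHT = 192
BYTES_PER_PIXEL = 2          # assumption: 16-bit bitmap
BUS_CLOCK_HZ = 33_513_982    # assumption: commonly cited DS bus clock

# Number of 32-bit words in one screen-sized bitmap.
words = SCREEN_WIDTH * SCREEN_HEIGHT * BYTES_PER_PIXEL // 4

# An error of 1 cycle in the setup time is paid once...
setup_error_cycles = 1
# ...while an error of 1 cycle per word is paid for every word transferred.
per_word_error_cycles = words * 1

extra_ms = per_word_error_cycles / BUS_CLOCK_HZ * 1000.0
print(f"{words} words, {per_word_error_cycles} extra cycles, {extra_ms:.3f} ms")
```

Under those assumptions, a one-cycle-per-word error shifts the end of the transfer by roughly three quarters of a millisecond, which is the kind of macro-level difference a game can actually notice.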

So, what are ARM7 timings like?

The ARM7 has a 3-stage pipeline, which means it can execute an instruction while decoding the next one and fetching the one after. So, for a lot of instructions, the execution time is capped by the access time for the memory region we're executing from. Some instructions may need more cycles to execute. Load and store instructions are also particular, since they access memory.

Different memory regions have different access times, depending on their data bus width and the type of memory access. Most of the available memory regions are integrated into the DS SoC, so they can be accessed in one cycle. VRAM has a 16-bit bus, so 32-bit accesses are broken into two 16-bit accesses and take two cycles.

External memory, however, has its own rules. There is a notion of nonsequential and sequential accesses. Basically, the memory being accessed may require a setup time for an initial access, but may be able to chain subsequent accesses in a faster way. The ARM7 makes use of this when fetching code: as long as a given instruction doesn't require extra cycles, the next one may be fetched in a sequential memory access, resulting in faster execution. Instructions like LDM and STM, which can load or store several words in a row, also make use of this.

Rules for the GBA slot ROM region are simple: the nonsequential and sequential access times are configured in EXMEMCNT. The data bus is 16-bit, so 32-bit accesses are broken down into two 16-bit accesses, where the second one is always sequential. The wifi card uses a similar interface, so the rules are the same.
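Those rules are simple enough to write down directly. The following Python sketch uses a hypothetical function name, and the nonsequential and sequential cycle counts are passed in as parameters since they come from EXMEMCNT; the numbers in the usage lines are arbitrary examples.

```python
def gba_rom_access_cycles(width_bits, nonseq, seq, sequential=False):
    """Access time for the GBA slot ROM region over its 16-bit data bus.

    width_bits: 8, 16 or 32.
    nonseq / seq: nonsequential and sequential access times (from EXMEMCNT).
    sequential: whether the first 16-bit access continues a previous one.
    """
    first = seq if sequential else nonseq
    if width_bits == 32:
        # Split into two 16-bit accesses; the second is always sequential.
        return first + seq
    return first


# Arbitrary example timings: 10 cycles nonsequential, 6 cycles sequential.
print(gba_rom_access_cycles(16, 10, 6))  # single 16-bit access
print(gba_rom_access_cycles(32, 10, 6))  # 32-bit access, split in two
```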

Main RAM, however, is more complicated. To understand how the timings there work, you have to know how those RAM chips operate.

I mentioned my little Wii U gamepad project before, and it's relevant here: it involves a FPGA-based SPI FLASH emulator, which uses SDRAM to store firmware code. I first tried using spispy, but since it wasn't up to the task, I ended up making my own - including a simple SDRAM controller.

The DS and DSi use FCRAM, which is a bit different: from my understanding, you address FCRAM by sending the full address in one go, instead of having to send separate row and column addresses. Besides that, FCRAM seems to work in a fairly similar way.

For example, SDRAM supports burst modes: you send the address once and access several data units in a row (in my case, 16-bit units). Since burst accesses are typically constrained to a given memory block (which appears to be 32 bytes for the DS), a smart memory controller needs to know when to prepare the next burst ahead of time. There is also a delay to terminating a burst.

Furthermore, the DS also seems to have a hard limit on how long a main RAM burst can last. Presumably, since main RAM is shared, this serves to avoid having one side hogging it for too long.

So basically, when main RAM is involved, access times aren't just a matter of "how long does this particular access take": the context around it has to be taken into consideration to some extent.

There is also, of course, the issue of main RAM contention: basically, when both ARM9 and ARM7 are trying to access main RAM at the same time, the memory controller will prioritize one of the two, based on the EXMEMCNT priority setting. In practice, this setting seems to always be set to give priority to the ARM7.

The issue here is apparent if you consider how melonDS works. Basically, execution is split into bursts. The scheduler determines how long a burst should last: the length is based on the time until the next scheduled event, and capped at 64 cycles. The ARM9 is run for this many cycles, then the ARM7 is run until it has caught up, then scheduled events are executed if needed; rinse and repeat.
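The loop described above can be sketched in a few lines of Python. This is a simplified illustration and not melonDS's actual scheduler: the name `run_slices` is made up, both CPUs are put on a single shared timeline, and CPU execution is reduced to log entries instead of running any code.

```python
import heapq

MAX_SLICE = 64  # cap on the length of one execution burst, in cycles


def run_slices(end_time, events):
    """Simulate the burst loop up to `end_time`.

    events: iterable of (timestamp, name) pairs.
    Returns a log of ("arm9", n), ("arm7", n) and ("event", name) entries.
    """
    heap = list(events)
    heapq.heapify(heap)
    now = 0
    log = []
    while now < end_time:
        next_event = heap[0][0] if heap else end_time
        # Burst length: time until the next event, capped at MAX_SLICE.
        # Always advance by at least one cycle so the loop makes progress.
        slice_len = max(1, min(next_event - now, end_time - now, MAX_SLICE))
        log.append(("arm9", slice_len))  # ARM9 runs ahead first
        log.append(("arm7", slice_len))  # then the ARM7 catches up
        now += slice_len
        while heap and heap[0][0] <= now:
            log.append(("event", heapq.heappop(heap)[1]))
    return log
```

The log makes the limitation visible: within a burst, the ARM9's cycles are all accounted for before the ARM7's, so there is no point at which the two could be seen competing for the same resource.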

It is less accurate than running everything in a tight lockstep, but it's also way more efficient. In practice, DS games don't rely on extremely tight synchronization between the two CPUs, so doing things this way is fine.

However, it makes it impossible to model things such as main RAM contention. There is simply no way to tell whether the two CPUs are going to be accessing main RAM at the same time. It could be estimated based on heuristics, but that would be a big fat hack.

In practice, I don't yet know if anything relies on main RAM contention, besides the infamous DSi menu loader. That's a bit of a special case, since the ARM9 runs with caches off, and the two CPUs are actively competing for main RAM access. In most games, the ARM7 runs its code from internal WRAM, and the ARM9 has caches; however caches aren't perfect, and the sound hardware may be accessing main RAM too. I don't know how much main RAM contention matters in games, given this.

Then, since we're talking about the ARM9, there's this too.

Timings there are even more complicated. First, the ARM9 runs at 67 MHz, or 134 MHz on the DSi, but the bus still runs at 33 MHz. This means that when the ARM9 needs to access any memory outside of its own caches and TCM, additional delays are incurred: in hardware terms, crossing clock domains requires additional buffering.

At least, from what I've observed, setting the ARM9 clock to 67 MHz on the DSi yields identical timings to the DS, so there's that. However, setting it to 134 MHz results in several differences, so I have to figure out the logic behind that.

Then, the ARM9 is also a more complex beast in itself.

The caches can greatly help performance, considering how slow main RAM is. However, they also add complexity to the overall timings. For example, upon a cache miss, an entire cache line needs to be loaded; however, the CPU may continue running while this happens, as long as it doesn't try to use the bus. That's what they call cache streaming. There's also a write buffer, which has its own mechanics too.

The ARM9 also has a longer pipeline, with 5 stages. This means some instructions may need more time to properly write their results to the CPU registers. Jakly said that there are special paths where a given instruction may send its result to the next instruction early if needed, but this doesn't cover every possible case.

For example, take the QADD instruction. It's a signed 32-bit addition with a sticky overflow flag. This instruction takes 1 cycle to execute, however it needs one extra cycle to actually write back its result to the destination register. So if the next instruction tries to use that register, it needs to wait one extra cycle.
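The QADD case can be modelled with a small Python sketch that tracks when each register's value becomes usable. The function name and the tuple layout are hypothetical, and the model ignores the early-forwarding paths mentioned above; it only captures the "result arrives one cycle late" rule.

```python
def count_cycles(program):
    """Count cycles for a straight-line instruction sequence.

    program: list of (dest, sources, exec_cycles, result_latency) tuples,
    where dest is a register number or None, sources is a tuple of register
    numbers, and result_latency is the extra delay before dest is usable.
    """
    ready_at = {}  # register -> cycle at which its value can be read
    cycle = 0
    for dest, sources, exec_cycles, result_latency in program:
        # Interlock: wait until every source register is ready.
        start = max([cycle] + [ready_at.get(r, 0) for r in sources])
        cycle = start + exec_cycles
        if dest is not None:
            ready_at[dest] = cycle + result_latency
    return cycle


# QADD r0, r1, r2 followed by an ADD that reads r0 (dependent)...
dependent = [(0, (1, 2), 1, 1), (3, (0, 4), 1, 0)]
# ...versus an ADD that reads unrelated registers (independent).
independent = [(0, (1, 2), 1, 1), (3, (5, 4), 1, 0)]
print(count_cycles(dependent), count_cycles(independent))
```

The dependent sequence comes out one cycle longer than the independent one, which matches the one-cycle wait described for QADD.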

I don't know all the details there. Jakly is more knowledgeable than I am, so I'll probably be relying on her to help figure out all the logic. The challenge is to implement it in a way that doesn't result in overbearing complexity and is optimizable to some extent.

As I said, at this point, I'm fixing up ARM7 timings. According to my tests, melonDS wasn't that far off, but the ARM7 still needs some fixes. I'll also need a lot more testing, in real world situations, not just specific instruction sequences repeated many times.

We'll also have to see what to do with the JIT. Surely, a lot of the overall timing model can be precomputed when compiling code blocks, so the JIT would have a decent advantage there. But I'm not sure about the more dynamic things, like the ARM9 caches. I'll have to see with Generic. But not before I've finished making all this work in the interpreter.

All in all, fun shit.

New direction for melonDS -- by Arisotura

[POST IS AN APRIL FOOLS JOKE]

It has appeared to us that after nearly 10 years of development, we are still very far from having a perfect DS emulator... thus, something has to be done. After all, you can't keep doing the same thing and expect different results, can you?

So we have been looking at those AI coding assistants.

We have seen Windows 11, and how the use of AI has helped turn it into a truly reliable, performant and user-friendly OS. We were originally doubtful about AI, as we always are towards any new technology, but we have to admit, it's pretty good.

So I first gave it a try.

I told Claude about the issues I was currently facing in melonDS, the timings work, and how difficult it all is. Claude offered a solution that at first seemed quite unorthodox, but actually makes a whole lot of sense.

After all, timing issues exist because timings exist. If you remove timings, no timing issues! That's pretty smart.

I offered to let Claude remove all timings from melonDS. It isn't perfect yet, but I observe that most problematic games are now running to some extent. I'm fairly confident that with more refining, we can solve the issue of timings once and for all.

The downside is that getting rid of timings basically means melonDS will be running everything as fast as possible, which requires quite a strong CPU. But not too strong, or your games will be running too fast. I haven't yet figured out how to make the framerate limiter work smoothly with timing-less emulation, but once again I'm confident Claude will come up with a genius solution.

And of course, we aren't going to stop at just timings. There is probably so much of melonDS that could be greatly simplified like that. I'm thinking of wifi emulation, for example.

If Claude doesn't manage on its own, we could also hire multiple AI agents and make them work as a team. Costs may be an issue, but for now we're doing well on that front. After all, it's true that AI consumes a lot of energy, but we believe that the cause of perfect DS emulation is worth this sacrifice.

So, sure this is an unexpected development, but I believe it will be great for melonDS and for DS emulation as a whole.

Chronicles of Timings: Tales of Destruction -- by Arisotura

Ah, timings. The infamous Horseman of Timings.

This post is going to be about a decision I'm taking for melonDS, but first, I'll write about my recent research regarding two old timing issues. I found it very interesting to dig into those games and figure out how they work.

1. Corrupted FMV audio in Over The Hedge - Hammy Goes Nuts

Basically, you start a game, and you have a bit of a video introducing the plot of the game... except the audio is covered in high-pitched beeps and loud screeching.

I first found that the game runs a video/audio decoder on the ARM9, which appears to have been largely written in ASM. The audio decoder writes its output in main RAM, where it gets sent to the audio hardware.

The way it works is interesting. The audio buffer size is calculated based on the length of the audio track, the audio frequency, and other parameters. In this case, the length is 30 blocks, one block being 128 samples. Then, the decoder is run until the buffer is filled to a certain point (23 blocks). At this point, audio playback is started. The ARM7 also starts a periodic alarm which will notify the ARM9 every time one block worth of audio has been played.

The alarm notification is used to run the audio decoder when needed. However, the audio decoder works in terms of bigger chunks: one chunk typically contains 6 blocks worth of audio. So the decoder attempts to process that many blocks in one go, but it stops processing new blocks when the output buffer is full.

The catch is that, as far as I can tell, when blocks are skipped, there is no logic to compensate for that. The next time the audio decoder runs, it will start working on the next chunk no matter what. Since the decoding process relies not only on the current input but also the previous output, skipping blocks breaks it. And that's what is happening on melonDS.

In this situation, the ARM9 needs to run slowly enough that it doesn't get too far ahead of audio playback, and doesn't fill the output buffer entirely.
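To make the failure mode concrete, here is a toy model of that buffer. The sizes (30 blocks, 6 blocks per chunk) come from the description above; the struct, its names, and the pacing loop are hypothetical, and the 23-block playback threshold is left out for brevity.

```cpp
#include <algorithm>

// Toy model of the FMV audio buffer. Not the game's code, just the bookkeeping.
struct AudioRing {
    static constexpr int kCapacityBlocks = 30; // buffer length, in 128-sample blocks
    static constexpr int kChunkBlocks = 6;     // blocks the decoder attempts per run
    int filled = 0;   // blocks decoded but not yet played
    int dropped = 0;  // blocks skipped because the buffer was full

    // One decoder run: consume a whole chunk, dropping whatever does not fit.
    void DecodeChunk() {
        int room = kCapacityBlocks - filled;
        int written = std::min(room, kChunkBlocks);
        filled += written;
        dropped += kChunkBlocks - written; // never recovered on the next run
    }

    // The ARM7 alarm: one block worth of audio has been played.
    void PlayBlock() {
        if (filled > 0) --filled;
    }
};

// Runs `decodes` decoder passes with `playedBetween` blocks played between
// passes, and returns how many blocks ended up being dropped.
int SimulateDropped(int decodes, int playedBetween) {
    AudioRing ring;
    for (int i = 0; i < decodes; ++i) {
        ring.DecodeChunk();
        for (int p = 0; p < playedBetween; ++p) ring.PlayBlock();
    }
    return ring.dropped;
}
```

When the decoder is paced at playback speed (6 blocks played per chunk decoded), nothing is dropped. When it runs three times too fast (2 blocks played per chunk), the buffer fills up after a few passes and every subsequent pass loses blocks.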

2. Freeze during tutorials in Sonic Chronicles - The Dark Brotherhood

This is another problematic one. This game uses interactive videos to explain new combat mechanics, but those seem to give emulators quite the trouble. On melonDS, the freeze has manifested in different ways as timings were changed in one way or another, but it has remained so far, even though the recent changes to cartridge emulation helped.

The first observation was that the game crashes pretty badly: it jumps to a completely bogus address (the value of which is actually an ARM instruction), which means emulation derailed pretty badly. The crash comes from using an out-of-bounds index in a jump table.

Looking at the code where this happens, it appears that we are, once again, dealing with hand-written ASM. It is a bizarre system with long chains of jumps and loops. It is likely related to the tutorial videos, some kind of script interpreter or decoder, but its exact function isn't relevant to the crash. The crash occurs because garbage is being fed into the system.

After a bunch of backtracking, I was able to figure out what's going on.

This whole system loads video scripts from the ROM and feeds them into the bizarre interpreter, where presumably they get turned into what appears onscreen. (I'm not sure if the term "scripts" is correct, but for the explanation's sake.) The two operations are staggered: the loader starts loading the next script from the ROM, and while that is underway, will execute the current script.

To know where to load the next script, the loader needs to know how long the current script is. This is where the issue lies: the loader runs as soon as the previous script ends, and thus, may run while the current script is still being loaded, but doesn't check for that situation. It first determines the current script's length, then sets up the ROM transfer for the next script, which will wait for any previous transfer to finish. Due to this order of operations, there is a chance the loader will try to read the current script's length before it has been loaded. You can guess how that goes.

This is a very similar problem to the above one: the ARM9 needs to run slowly enough to never get too far ahead of the ROM transfer. In fact, since the ARM9 is mostly busy writing to memory, this seems to require data cache emulation.

There are other well-known timing issues, like the DSi Sound App crash, but I had already documented that one, so I won't do that again.

Notice a common trend? Those all stem from shoddy programming. The bugs go unnoticed because things happen to work on hardware, by pure luck. The same code on a modern system would inevitably crash, leading to the bugs being found and fixed. But, unlike modern systems, the DS has very deterministic timings.

So what do we do? We have been beating around the bush for too long here, and it's time to do something.

Basically, to emulate the ARM9 caches.

I was going to make an attempt at data cache emulation to see how it would go, when I was informed that there is already an old PR for cache emulation.

(to DesperateProgrammer: I'm sorry!! should have dealt with this way sooner)

I merged it into a separate branch, and fixed a couple bugs. So far, the results are encouraging. The first issue in this post seems to be entirely fixed. The second issue persists, but it's getting further in. The DSi Sound App is also functional.

I think the cache logic itself is good, but there are other improvements to be done as far as ARM9 timings are concerned. It will take some reworking, as the way melonDS handles memory timings isn't great. Of course, there's also room for optimization, but let's not get ahead of ourselves - get things working first, optimize later.

When I get this to a satisfactory state, I will compare performance against stock melonDS. I can see 3 possible profiles to compare: no cache emulation (stock melonDS), instruction cache only, both caches. Depending on how this turns out, there may or may not be options for different cache emulation profiles.

For example, I could imagine instruction cache emulation becoming the baseline. Since instruction fetches are very predictable, the instruction cache is a minor performance hit. Data fetches, however, are far more unpredictable, so data cache emulation might hurt performance too much to be part of the baseline. We'll see.

With this, we can hope to finally vanquish the Horseman of Timings. Well, not quite, there's still main RAM contention, but hopefully nothing beyond the DSi menu loader relies on that.

Fixes, and future of melonDS -- by Arisotura

Things have been difficult lately, mental health wise. However, despite this, we're still on a pretty good upward trend on that front.

Anyway, I mentioned the cartridge interface in the previous post, so I've been doing an accuracy-oriented revamp of this. I've already been through the technical details, so instead, I'll post about the outcome of this.

This fixes the freeze in Surviving High School, and likely other DSiWare titles.

This also fixes Rabbids Go Home: in DS mode, the antipiracy no longer kicks in, so the game Just Works(tm). Also, turns out this game skips the antipiracy check when running on a DSi, so that's why it was working fine in DSi mode.

I've had reports that this also fixes the crash in The World Ends With You, so that's great.

I've also been able to figure out why several games crash in DSi mode. Namely, games that aren't DSi-enhanced, but were built against a recent SDK.

The code for reading the ROM retrieves the correct value for the ROMCTRL register from the ROM header, at 0x027FFE60. When running on a DSi, that address is adjusted to 0x02FFFE60, to account for the larger main RAM. Technically, 0x02FFFE60 would also work on the DS, due to mirroring; however, since it's not within any MPU region, trying to access it causes a data abort.
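The mirroring part can be shown with a couple of lines of arithmetic. This is only a sketch: the 4 MB and 16 MB main RAM sizes are the commonly documented ones rather than something stated in this post, the function name is made up, and the MPU check that causes the data abort is not modelled.

```cpp
#include <cstdint>

constexpr uint32_t kMainRAMSizeDS  = 4u * 1024 * 1024;  // assumed: 4 MB on the DS
constexpr uint32_t kMainRAMSizeDSi = 16u * 1024 * 1024; // assumed: 16 MB on the DSi

// Offset into main RAM for an address inside the 0x02000000-0x02FFFFFF region.
// The RAM size is a power of two, so mirroring is a simple mask.
constexpr uint32_t MainRAMOffset(uint32_t addr, uint32_t ramSize) {
    return addr & (ramSize - 1);
}

// On the DS, both addresses land on the same byte of RAM.
static_assert(MainRAMOffset(0x027FFE60, kMainRAMSizeDS) ==
              MainRAMOffset(0x02FFFE60, kMainRAMSizeDS), "mirrors on DS");

// On the DSi, they are two distinct locations.
static_assert(MainRAMOffset(0x027FFE60, kMainRAMSizeDSi) !=
              MainRAMOffset(0x02FFFE60, kMainRAMSizeDSi), "distinct on DSi");
```

So the data is physically there on the DS. The abort comes purely from the access falling outside the MPU regions the game has set up.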

The issue was due to missing support for the SCFG access registers. Those registers allow blocking access to specific DSi I/O ranges, which serves to implement DS backwards compatibility or restrict access to specific hardware components (for example, blocking cartridge games from accessing the NAND). In this case, games read SCFG_A9ROM and determine that they're running in DSi mode if the value is non-zero, but if access to SCFG registers is disabled, the value will be zero.
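In code form, the detection logic boils down to something like the following. This is a minimal sketch of the behaviour described above, not melonDS code: the struct and function names are hypothetical, and a single boolean stands in for the actual access-control registers.

```cpp
#include <cstdint>

// Hypothetical stand-in for the SCFG block as seen by the ARM9.
struct ScfgModel {
    bool scfgAccessEnabled; // whether the game is allowed to see SCFG registers
    uint32_t a9rom;         // backing value of SCFG_A9ROM

    // With access disabled, the register reads back as zero.
    uint32_t ReadA9ROM() const {
        return scfgAccessEnabled ? a9rom : 0;
    }
};

// What the SDK code effectively does: non-zero means "running in DSi mode".
bool GameThinksItIsOnDSi(const ScfgModel& scfg) {
    return scfg.ReadA9ROM() != 0;
}
```

Without the access check, a game that should take the DS path sees a non-zero value, assumes DSi mode, and goes on to use the 0x02FFFE60 address.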

This brings me to ideas I have in mind for melonDS, and the direction things are going to go.

When making my changes to cartridge emulation, I ran into an issue with cart DMA transfers, which broke at least one game. It's fixed now, but it highlights shortcomings in the way DMA triggers are done in melonDS. That code wasn't great in the first place, and it was modelled after DS DMA. Then when DSi support was added, the required support for NDMA was duct-taped to the existing system.

It does the job, but it's not great.

It also highlights some suboptimal practices: using magic numbers instead of enums, for example. It tends to make the code harder to read.
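To illustrate the kind of change meant here, compare a check written against a bare number with one written against an enum. The names below are hypothetical and not taken from the melonDS source; the numeric values follow the ARM9 DMA start modes as I understand them from public hardware documentation, so treat them as assumptions.

```cpp
#include <cstdint>

// Illustrative only: hypothetical names, assumed values.
enum class DMAStartMode : uint8_t {
    Immediate = 0,
    VBlank    = 1,
    HBlank    = 2,
    DSCart    = 5,
};

struct DMAChannel {
    DMAStartMode startMode;
    bool enabled;
};

// Magic-number style would read: if (enabled && startMode == 5) ...
// With the enum, the intent is visible at the call site.
bool TriggersOnCart(const DMAChannel& ch) {
    return ch.enabled && ch.startMode == DMAStartMode::DSCart;
}
```

A scoped enum also stops unrelated integers from being passed in by accident, which is a small bonus on top of readability.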

Since we're talking about the codebase, it's no secret that I'm not a fan of how it has evolved in some aspects. The big 1.0 refactor has exacerbated some of those issues, as making the codebase support something it wasn't originally meant for (running multiple DS instances within one process) required a major overhaul.

For example: originally, some components in melonDS were modelled as C++ classes when they needed to be instantiated more than once (for example, the 2D renderers). The rest were simple namespaces. But suddenly, everything had to be remodelled as a class. We ran into issues due to the naming convention used in melonDS.

So we're going to fix that by making changes to the naming convention. This is something that can be done progressively, since unlike the 1.0 refactor, it doesn't leave the codebase in a broken state until it's complete. However, it's going to have implications for people who have currently open pull requests. This change will definitely take some planning.

I also want to use proper enums and make the code more readable, as I said. I also want to organize the source directory better, which I've already been doing: for example, I put the DSP HLE modules in their own subdirectory, I also did the same to the various NDS cartridge implementations, ...

This sort of stuff isn't very exciting to the end user, but to us, it can make a big difference - making the codebase easier to read, easier to maintain, and overall more pleasant to work with. Some areas of the codebase kind of kill my motivation when I have to touch them.

I also have other things in mind for melonDS.

For example, I'm brainstorming ideas to make DSi emulation a smoother experience. We're at a point where we could remove the "experimental" disclaimer that's on it, but there's still a lot to do.

Since the NAND is mostly a FAT volume, I'd like to experiment with syncing that to a folder, like we do for DLDI and such. I want to experiment with such things as bigger NAND images, a smoother process for launching DSiWare titles, and so on. I'm even pondering what it would take to build a NAND from scratch, and ideas like a custom DSi menu.

Launching things is something that could use an overhaul in general. On the DS, games can only ever be launched through the cartridge interface, so melonDS was built around this. However, on the DSi, they can also be loaded from the NAND (DSiWare), or even from the SD card (homebrew, CFW, ...). melonDS still doesn't really offer good support for this.

Which brings me to the UI.

I have some ideas in mind for a more flexible configuration system. Currently, melonDS applies the same configuration to every game you run, and it's becoming apparent that it's too limited for the various possibilities the DS offers.

One idea is a game list interface, like Dolphin or PCSX2. The main concern with such a UI would be how to make it work well when our minimum window size is 256x384. But such an interface could offer a way to set up per-game configuration, cheats and such, all of which are kind of cumbersome to do with the current UI.

Another possibility could be profiles: whether those would be emulator-wide or per-game, I don't know yet, but they could be a way to support loading different settings, different save files, and so on.

We could even support custom screen layouts, stuff like rendering two melonDS instances within one window simulating split-screen multiplayer, etc...

We could also try to implement RetroAchievements in a nice way with such an interface, seeing as that feature is a popular request.

Many ideas, but it takes time to code any of this. So I don't know how far we'll get.

Oh, and, the OpenGL renderer. I've been taking a break from that stuff, but I want to try to add filtering before the 1.2 release.

Last thing I want to talk about: timings.

I want to try to address the known timing issues in some way.

The problem is mostly when CPU timings are involved. With things like cartridge transfer timings, it's generally not that hard once you understand the logic behind the timings, and model it decently accurately. CPU timings, on the other hand, are something else entirely: they rely on a lot of different factors. In the DS, you have the ARM9 caches, shared main RAM, various ways the ARM9 itself can save a few cycles here and there...

Fully modelling the complexity of CPU timings would require cycle-accurate emulation. melonDS is not cycle-accurate, and was never built for that. However, Jakly is attempting cycle-accurate DS emulation in DualSOUP. I'm definitely interested to see whether cycle-accurate DS emulation can be achieved at playable speeds with current hardware, so I'm following this project with great interest.

The melonDS approach so far is to figure out the underlying logic and try to get close - because if you don't understand that logic, then any attempts you make end up in a game of whack-a-mole. I remember trying to understand the logic behind ARM9 timings, but realizing the sheer complexity, and not really getting anywhere.

However, one thing we can do is add in instruction cache emulation. There have been attempts at this and they seem to fix some issues, like for example the DSi Sound App.

Why specifically the instruction cache? The ARM9 has two caches: an instruction cache and a data cache. The former is used when fetching program code, and the latter is used when accessing data in memory.

Emulating the instruction cache is actually feasible with little to no performance loss, because instruction fetches are very predictable: they're sequential unless a branch is taken. Thus, it's possible to only check the cache upon cache line boundaries and branches, and assume that every other fetch is a cache hit. Emulating the data cache is worse for performance because data fetches are unpredictable, so every memory access needs to be checked against the cache.
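Here is a rough sketch of that shortcut. It is not the code from the existing PR. The cache geometry (8 KB, 4-way, 32-byte lines) and the round-robin replacement are assumptions on my part, and all names are hypothetical.

```cpp
#include <array>
#include <cstdint>

class ICacheModel {
public:
    static constexpr uint32_t kLineSize = 32; // assumed line size in bytes
    static constexpr uint32_t kWays = 4;      // assumed associativity
    static constexpr uint32_t kSets = 64;     // 8 KB / (32 bytes * 4 ways)

    uint32_t lookups = 0; // how many times the tags were actually checked
    uint32_t misses = 0;

    // Called for every instruction fetch. Returns true if a tag lookup was
    // needed, false if the fetch was assumed to hit the current line.
    bool Fetch(uint32_t addr, bool afterBranch) {
        uint32_t line = addr / kLineSize;
        if (!afterBranch && haveLine_ && line == currentLine_)
            return false; // sequential fetch within the same line
        Lookup(line);
        currentLine_ = line;
        haveLine_ = true;
        return true;
    }

private:
    void Lookup(uint32_t line) {
        ++lookups;
        uint32_t set = line % kSets;
        uint32_t tag = line / kSets;
        for (uint32_t w = 0; w < kWays; ++w)
            if (valid_[set][w] && tags_[set][w] == tag) return; // hit
        ++misses;
        uint32_t victim = nextVictim_[set];
        nextVictim_[set] = (victim + 1) % kWays; // round-robin (assumption)
        tags_[set][victim] = tag;
        valid_[set][victim] = true;
    }

    std::array<std::array<uint32_t, kWays>, kSets> tags_{};
    std::array<std::array<bool, kWays>, kSets> valid_{};
    std::array<uint32_t, kSets> nextVictim_{};
    uint32_t currentLine_ = 0;
    bool haveLine_ = false;
};

// Fetches `count` sequential 4-byte ARM instructions starting at `start`.
ICacheModel RunSequential(uint32_t start, uint32_t count) {
    ICacheModel cache;
    for (uint32_t i = 0; i < count; ++i)
        cache.Fetch(start + i * 4, i == 0);
    return cache;
}
```

Sixteen sequential ARM instructions span two cache lines, so this only performs two tag lookups instead of sixteen. A real implementation would also have to drop the "current line" shortcut whenever the cache is invalidated.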

So I think this is worth considering for melonDS 1.2.

That's about it for now. Have fun!

The DS cartridge interface: endless fun -- by Arisotura

Depending on your definition of fun, of course.

This is a bit of a pace change from all the recent OpenGL stuff: this is going to be some hardware infodumping slash juicy technical post. It all started with this bug report: Bug: Surviving High School (DSiWare) not booting.

Basically, this DSi game gets stuck on a black screen. This bug report piqued my interest, and oh boy, I had no idea what kind of rabbit hole it would be.

I first started by doing what I do in order to troubleshoot emulation bugs: track where each CPU is hanging around at, dump RAM, throw it into IDA Pro. This gives me an idea what the game is doing, and hopefully provides a lead on the bug. Another possibility is to try modifying the cache timing constants to probe for a timing bug, but this made no difference here.

In this situation, the ARM9 was running an idle loop, but the ARM7 was stuck in an endless loop. Backtracking from this, I found that the game was reading the cartridge's chip ID, comparing it against the value stored at 0x02FFFC00, and panicking because the two were different. This code isn't atypical for a DS game...

But... wait?!

This game is a DSiWare title! There is no cartridge here.

No idea why it's reading the cartridge chip ID. Maybe the game was intended to be released in physical form? But in this case, the chip ID stored at 0x02FFFC00 is zero. When no cartridge is inserted, melonDS returns 0xFFFFFFFF instead of zero, hence one part of the bug.

Changing melonDS to return zero when no cartridge is inserted would fix the bug, but only when there's indeed no cartridge. If there's one, it will fail. This is because when booting a DSiWare title, the DSi menu forcefully powers off the cartridge if there's one inserted. melonDS doesn't emulate this bit.

This was the start of a longer journey, which ended up becoming its own branch, again. I don't like the way the cartridge code in melonDS has evolved over time.

On one hand, it was built quickly to answer simple needs in simpler times, and things were kind of just piled together. The object-oriented implementation of different cartridge types was a good idea, but they were kind of jammed into one code file, which turned into a bit of a mess.

With DSP HLE, I started experimenting with using subdirectories for specific areas of the emulator, rather than just dumping all the code files into one directory. I thought that doing the same for the cartridge code, after splitting it into separate files, was a good idea. Other areas of the emulator could use this kind of reorganization too, but one thing at a time.

On the other hand, the cartridge interface implementation in melonDS is technically wrong. This is where the fun begins.

In the DS, the cartridge interface is the piece of hardware that sits between the CPU and the cartridge. It can operate in two modes: ROM and SPI. ROM mode uses all the data lines to transfer one byte per cycle, and is typically used to access the ROM. SPI mode uses two data lines as a SPI interface, and is typically used to access the save memory.

The cartridge interface's registers are exposed to both ARM9 and ARM7, but only one CPU may use it at a given time - which CPU gets access is selected by bit 11 in EXMEMCNT. This is a rather important detail - if you happen to remember the old times, we've had a rather evil bug related to this.

I assumed that the cart interface was one set of registers, one hardware block, and that it was accessible to either one or the other CPU. But that's not how this works, actually.

Each CPU has its own register set, and its own cart interface hardware block. Technically, both sides are always functional, and can run transfers at the same time. The EXMEMCNT access right setting merely controls which one is connected to the actual cartridge - the other one will just be reading 0xFF bytes.
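Sketched out, the corrected model looks something like this. The function names are hypothetical, and the polarity of bit 11 (clear for ARM9, set for ARM7) is taken from public documentation rather than from this post, so treat it as an assumption.

```cpp
#include <cstdint>

enum class CPU { ARM9, ARM7 };

// Which CPU's cart interface block is wired to the physical cartridge.
// Assumption: EXMEMCNT bit 11 clear = ARM9, set = ARM7.
constexpr CPU CartOwner(uint16_t exmemcnt) {
    return (exmemcnt & (1u << 11)) ? CPU::ARM7 : CPU::ARM9;
}

// Byte seen by `reader`'s own interface block during a ROM-mode transfer.
// Both blocks run their transfers; only the connected one sees cart data.
constexpr uint8_t CartReadByte(CPU reader, uint16_t exmemcnt, uint8_t byteFromCart) {
    return (reader == CartOwner(exmemcnt)) ? byteFromCart : 0xFF;
}
```

The point is that the disconnected side does not refuse to run: it completes its transfer like normal and simply gets 0xFF for every byte.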

Another detail that is wrong is timings.

The way communication works in ROM mode is that you send an 8-byte command to the cartridge, and optionally send or receive data. For example, to retrieve the cart's chip ID, you send a command with the first byte set to 0xB8, then receive one data word: the chip ID.

Since the interface transfers one byte per cycle, it takes 8 cycles to transmit the full command, and 4 cycles per data word transferred. Since the cart's ROM chip may need extra time to fulfill the command, it is also possible to configure delays before the data transfer, and between each 512 bytes of data. The delays are specified in cycles. The duration of a cycle can be either 5 or 8 system cycles (i.e. cycles of the 33.51 MHz system clock).
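As a back-of-the-envelope version of this (unbuffered) timing model, the total duration of a ROM-mode read can be estimated as below. The struct and field names are hypothetical, and where exactly the hardware inserts each delay is simplified.

```cpp
#include <cstdint>

struct RomTransferConfig {
    uint32_t dataBytes;       // payload size in bytes (a multiple of 4)
    uint32_t preDelay;        // delay before the data transfer, in cart cycles
    uint32_t blockDelay;      // delay between each 512 bytes, in cart cycles
    uint32_t sysPerCartCycle; // 5 or 8 system cycles per cart cycle
};

// Estimated duration of one transfer, in system cycles.
inline uint64_t RomTransferSystemCycles(const RomTransferConfig& c) {
    uint64_t cartCycles = 8; // the 8-byte command, one byte per cycle
    if (c.dataBytes > 0) {
        uint32_t blocks = (c.dataBytes + 511) / 512;
        cartCycles += c.preDelay;
        cartCycles += uint64_t(c.dataBytes / 4) * 4;       // 4 cycles per word
        cartCycles += uint64_t(blocks - 1) * c.blockDelay; // gaps between blocks
    }
    return cartCycles * c.sysPerCartCycle;
}
```

For example, a chip ID read (one data word, no delays, 5 system cycles per cart cycle) comes out at (8 + 4) * 5 = 60 system cycles.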

Cartridge transfer delays in melonDS were modelled based on this. However, the model is missing one simple fact, and this ends up making cart transfers slower than they should be.

Jakly figured out that there is buffering involved. Basically, when a data word is transferred from the cartridge, it is placed in a buffer where it may be read out (at 0x04100010), and the DRQ signal is raised to notify the CPU (or DMA). However, the buffer can actually store two words worth of data, so when one data word is available, the interface can start transferring another word, rather than having to wait for the CPU to read out the data.

This buffering scheme is definitely worth implementing. In melonDS, the lack of buffering ends up adding about 400 cycles per 512 bytes of data transferred.
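To see why the buffer depth matters, here is a small simulation. It is only a sketch under made-up assumptions: the per-word transfer time and the read latency are illustrative parameters rather than measured values, and the function is hypothetical.

```cpp
#include <algorithm>
#include <cstdint>
#include <vector>

// Time (in system cycles) until the last word has been read out.
//  wordCycles  : time the interface needs to pull one word from the cart
//  readLatency : time the CPU or DMA needs to read out one word after DRQ
//  capacity    : how many words the buffer can hold (1 = no buffering ahead)
uint64_t TransferTime(uint32_t words, uint64_t wordCycles,
                      uint64_t readLatency, uint32_t capacity) {
    if (words == 0) return 0;
    std::vector<uint64_t> readAt(words);
    uint64_t busFree = 0; // when the interface finished the previous word
    for (uint32_t i = 0; i < words; ++i) {
        uint64_t start = busFree;
        // A new word can only start once there is a free slot for it.
        if (i >= capacity) start = std::max(start, readAt[i - capacity]);
        uint64_t available = start + wordCycles;
        uint64_t prevRead = (i > 0) ? readAt[i - 1] : 0;
        readAt[i] = std::max(available, prevRead) + readLatency;
        busFree = available;
    }
    return readAt[words - 1];
}
```

With a one-word buffer, every word pays the read latency on top of the transfer time. With a two-word buffer, the readout overlaps with the next transfer and the latency mostly disappears from the total.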

I remember that Rabbids Go Home suffers from a related issue. Basically, the game runs several ROM transfers and measures how long they take. The total needs to fall within a certain range, or the anti-piracy kicks in and the game freezes. So implementing buffering into melonDS might fix this.

I've also been finding out that write transfers (when transferring data to the cartridge) suffer from a couple hardware bugs, too. For example, under certain specific circumstances, the cart interface may accidentally send out one data word despite the buffer being empty. Some of this is worth documenting, but not necessarily worth emulating, at least for now.

Then you have the DSi, which has two cartridge interfaces.

Yes, you read this right.

Basically, the DSi was planned to have two cartridge slots. In the end, Nintendo didn't like it, so it was scrapped.

The functionality is still part of the retail DSi. The second cartridge interface is completely functional. It even appears that the retail SoC has the required signals for a second cart slot, but they aren't connected to anything on the motherboard.

So how does this all work?

The second cart interface has the same register layout as the first one. The registers may be found at 0x040021A0 instead of 0x040001A0 (and 0x04102010 instead of 0x04100010 for the transfer buffer). There are also new IRQ lines for it (IRQ 26 and 27, instead of 19 and 20 respectively), a new DMA trigger line, and a separate CPU access setting in EXMEMCNT (bit 10). This cart interface can't be used with old DMA, but NDMA has an adequate trigger mode for it (0x05).

Register SCFG_MC was added to control the cartridge slots. Each cartridge may be turned on or off in software. This is used to reset the cartridge - initializing a DSi cartridge requires a reset, but the original interface provided no way to do that in software. SCFG_MC also serves to turn off the cartridge when loading DSiWare, for example.

An empty cartridge slot is also forced off by hardware. The nonexistent second slot is considered to always be empty, so it is always off.

It's also worth noting that the on/off status applies to the cartridge itself. The cart interface is functional regardless, but it will read 0x00 bytes if the cart is off.
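Applied to the chip ID read from earlier, the DSi behaviour can be sketched like this. The struct and function are hypothetical, and the chip ID value used in the usage note is just a placeholder.

```cpp
#include <cstdint>

// Hypothetical model of one DSi cartridge slot.
struct CartSlot {
    bool inserted;   // is there a cartridge in the slot?
    bool poweredOn;  // software on/off state set through SCFG_MC
    uint32_t chipID; // what the cartridge would answer to command 0xB8
};

// The interface still runs the transfer, but a cart that is off (or absent,
// since an empty slot is forced off by hardware) yields 0x00 bytes.
inline uint32_t ReadChipID(const CartSlot& slot) {
    bool cartIsOn = slot.inserted && slot.poweredOn;
    return cartIsOn ? slot.chipID : 0x00000000;
}
```

With this, a DSiWare title sees a chip ID of zero both when the slot is empty and when the DSi menu has powered off an inserted cartridge, for instance ReadChipID(CartSlot{true, false, 0x00007FC2}) gives 0.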

SCFG_MC also provides another setting to swap the two cartridge interfaces. The point would be that games could be loaded from either cart slot without needing to know which slot is in use, and it would also guarantee backwards compatibility with DS games. This setting merely swaps the address at which each cart interface appears, as well as the IRQ lines and all.
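A compact way to picture the swap, using the addresses and IRQ numbers listed above. The struct, the field names and the function are hypothetical.

```cpp
#include <cstdint>

// Where one cart interface shows up from the CPU's point of view.
struct CartInterfaceMap {
    uint32_t regsBase; // base of the control registers
    uint32_t dataPort; // transfer buffer address
    int irqA;          // first IRQ line (19 or 26)
    int irqB;          // second IRQ line (20 or 27)
};

constexpr CartInterfaceMap kFirstWindow{0x040001A0, 0x04100010, 19, 20};
constexpr CartInterfaceMap kSecondWindow{0x040021A0, 0x04102010, 26, 27};

// Returns the window through which physical interface `index` (0 or 1)
// is visible, given the state of the swap setting in SCFG_MC.
constexpr CartInterfaceMap MapFor(int index, bool swapped) {
    return ((index == 0) != swapped) ? kFirstWindow : kSecondWindow;
}
```

With the swap enabled, the second interface appears at the classic DS addresses and IRQ lines, which is what would let existing code run from either slot unmodified.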

So all in all, this requires some foundational changes to the way cartridge stuff is emulated in melonDS. As well as a lot of hardware testing to figure out every detail, answer every question that is raised along the way, and so on.

But, I hope, the improved accuracy will be worth it.

Little status update -- by Arisotura

So yeah, it's been a while since the new OpenGL renderer was merged in...

Haven't been very active with melonDS since then. I've mostly been taking a well-deserved break from all this intensive coding.

Real life is catching up, too. Mental health stuff. Things coming up that I have to take care of.

Add a minor Hytale addiction to the mix, and... yeah.

I'm going to post a few notes about the future of melonDS at large.

First, I've been toying with a Golden Sun hack for the OpenGL renderer.

Flicker-free hi-res. When I had first attempted it, there was some flickering, which was caused by color conversion issues that have since been fixed, so I figured I could give it another try.

It is a gross hack, but a nice proof of concept regardless. It doesn't address the performance issue (which will need a separate fix), but it fixes up the upscaling issue by replicating what Golden Sun does in a high-level manner.

I could envision adding a more refined version of this hack into the renderer. After all, the OpenGL renderer is a big hack in itself - upscaling is not something the DS can do. As long as the accurate path (in this case, the software renderer) is hack-free, this can slide.

Next, I've also been working on the melonDS site.

One of my ideas is to add a wiki, which would keep all melonDS-related information, help pages, and such, in a less awkward way than this site does. I made a test run locally with Dokuwiki - so far, I ported the melonDS site skin to it, and added a custom authentication plugin, so that it functions with the same user accounts as the rest of the site.

I'm not quite sure whether to keep going with this, or use Mediawiki... I think Dokuwiki will be fine for what this is, and I'd prefer something that's light on the server.

I also have some plans in mind to get the community more involved in melonDS-related affairs. The wiki, the documentation aspect, is one of them.

I also have ideas in mind for the main site (which you are currently looking at), but I haven't yet done any work there. I want to rework the home page, to better present melonDS and what it's good for, make it more clear which version is the latest release, and so on. I might move the blog to a separate page, maybe only include certain posts on the homepage...

I also want to add better ways to search the blog. Categorization, sorting by date, maybe a search function. This stuff didn't feel very necessary back in 2016, but now the blog has been operating for nearly 10 years, and there's a lot of content. It can get hard to look for a specific post.

Which also brings me to quality-of-life enhancements to the blog itself. Some kind of formatting toolbar or reference - as it is now, you can use basic BBCode in comments, but there is no reference as to what is possible. Maybe Markdown support would be good to have, instead. I don't know. What I definitely want to add, however, is attachment support, much like what the board has had for a while. It wouldn't be meant for user comments, but would greatly help when writing posts with images and such.

Web development is my least favorite area, so I've been giving myself time to develop those ideas before I actually make attempts (or, in other words, being lazy :P ).

Another idea would be to make the IRC/Discord chats more prominent, so you don't have to look into the forum to find them.

Which brings me to the other point of concern coming up: Discord.

If you've been following the news, you might know that they plan to add age verification. Which is basically a thinly veiled pretext to collect your ID or face.

I could envision a few outcomes to this:

1. In the face of public outcry, Discord backs off, and the status quo is maintained... at least for now.

2. People move en masse to another chat platform.

3. People complain for a while but just adapt to the new situation, due to the lack of good alternatives.

4. A mix of 2 and 3, with people moving to different chat platforms.

Seeing as I'm present in several different servers, I'm not exactly looking forward to having to maintain presence on several different chat platforms due to this. But having to provide identifying information to Discord isn't appealing either. It's not like they don't have a track record of security breaches.

At this point, I don't yet know what to do with the Kuribo64 Discord server. I intend to wait and see, to go with the flow. So far, we don't really have a good alternative to Discord in mind. Moving will inevitably cut off some of the community, and I want to minimize that.

Seeing as we are still present on IRC, this may pick up some activity, who knows. But the problem with IRC is that of accessibility, especially when compared to Discord. You miss activity when you're not connected, unless you use a BNC (which requires a server to run it on). You can't edit or delete posts. You can't embed files either, you have to use an external service for that. As far as user-facing features go, you get what comes with your client of choice.

IRC however has the advantage of being decentralized. Unlike a platform like Discord, "IRC" is not owned by one big entity, because IRC is just the protocol. If your IRC server of choice starts enshittifying, you move to a different server, and that's it - it's nothing like moving to a whole different platform.

If only there was a modern version of IRC, that could address the shortcomings...

So, yeah. Wait and see, I guess.

That's it for now. When those things advance more, I'll keep you updated.

blackmagic3 merged! -- by Arisotura

After much testing and bugfixing, the blackmagic3 branch has finally been merged. This means that the new OpenGL renderer is now available in the nightly builds.

Our plan is to let it cool down and potentially fix more issues before actually releasing melonDS 1.2.

So we encourage you to test out this new renderer and report any issues you might observe with it.

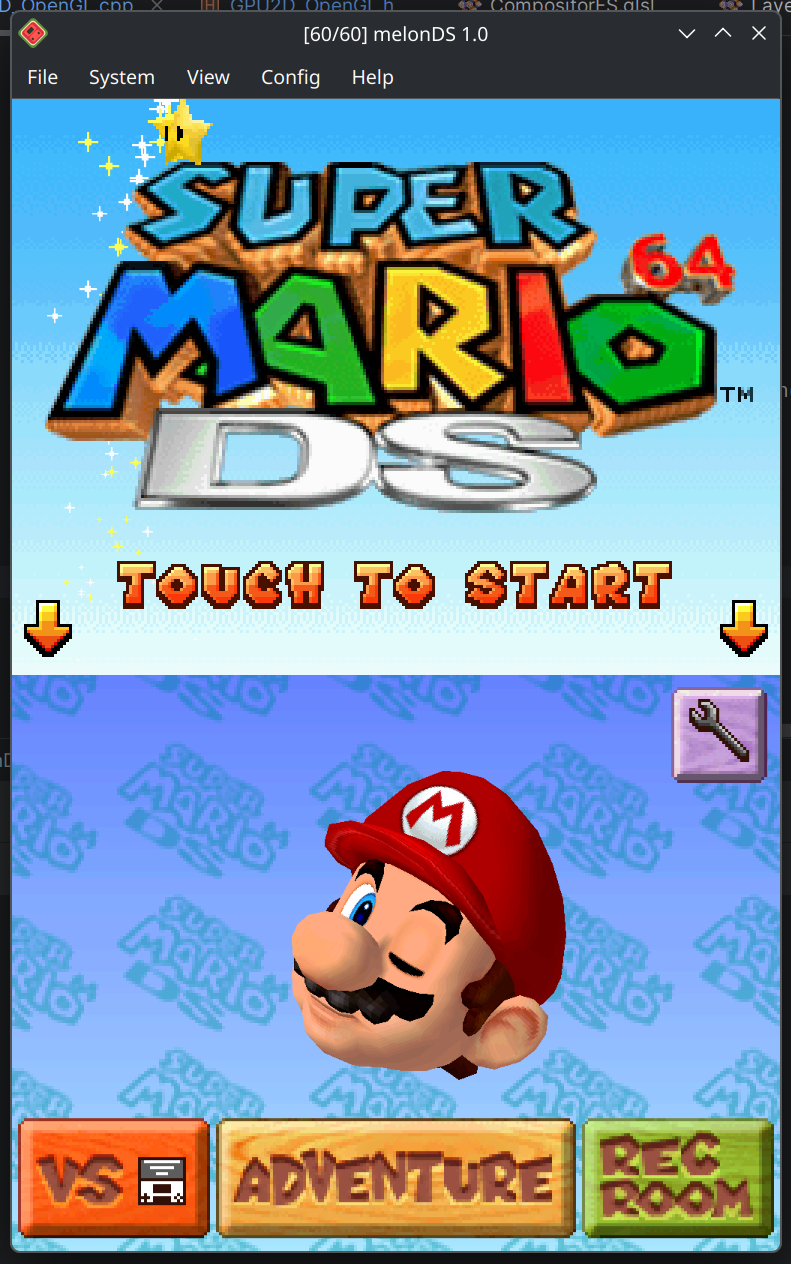

There are issues we're already aware of, and for which I'm working on a fix. For example, FMVs that rely on VRAM streaming, or Golden Sun. There may be tearing and/or poor performance for now. I have ideas to help alleviate this, but it'll take some work.

There may also be issues that are inherent to the classic OpenGL 3D renderer, so if you think you've encountered a bug, please verify against melonDS 1.1.

If you run into any problem, we encourage you to open an issue on Github, or post on the forums. The comment section on this blog isn't a great place for reporting bugs.

Either way, have fun!

Golden Sun: Dark Destruction -- by Arisotura

It's no secret that every game console to be emulated will have its set of particularly difficult games. Much like Dolphin with the Disney Trio of Destruction, we also have our own gems. Be it games that push the hardware to its limits in smart and unique ways, or that just like to do things in atypical ways, or just games that are so poorly coded that they run by sheer luck.

Most notably: Golden Sun: Dark Dawn.

This game is infamous for giving DS emulators a lot of trouble. It does things such as running its own threading system (which maxes out the ARM9), abusing surround mode to mix in sound effects, and so on.

I bring this up because it's been reported that my new OpenGL renderer struggles with this game. Most notably, it runs really slow and the screens flicker. Since the blackmagic3 branch is mostly finished, and I'm letting it cool down before merging it, I thought, hey, why not write a post about this? It's likely that I will try to fix Golden Sun at least enough to make it playable, but that will be after blackmagic3 is merged.

So, what does Golden Sun do that is so hard?

It renders 3D graphics to both screens. Except it does so in an atypical way.

If you run Golden Sun in melonDS 1.1, you find out that upscaling just "doesn't work". The screens are always low-res. Interestingly, in this scenario, DeSmuME suffers from melonDS syndrome: the screens constantly flicker between the upscaled graphics and their low-res versions. NO$GBA also absolutely hates what this game is doing.

So what is going on there?

Normally, when you do dual-screen 3D on the DS, you need to reserve VRAM banks C and D for display capture. You need those banks specifically because they're the only banks that can be mapped to the sub 2D engine and that are big enough to hold a 256x192 direct color bitmap. You need to alternate them because you cannot use a VRAM bank for BG layers or sprites and capture to it at the same time, due to the way VRAM mapping works.

However, Golden Sun is using VRAM banks A, B and C for its textures. This means the standard dual-screen 3D setup won't work. So they worked around it in a pretty clever way.

The game renders a 3D scene for, say, the top screen. At the same time, this scene gets captured to VRAM bank D. The video output for the bottom screen is sourced not from the main 2D engine, but from VRAM - from bank D, too. That's the trick: when using VRAM display, it's possible to use the same VRAM bank for rendering and capturing, because both use the same VRAM mapping mode. In this situation, display capture overwrites the VRAM contents after they're sent to the screen.

At this point, the bottom screen rendered a previously captured frame, and a new 3D frame was rendered to VRAM, but not displayed anywhere. What about the top screen then?

The sub 2D engine is configured to go to the top screen. It has one BG layer enabled: BG2, a 256x256 direct color bitmap. VRAM bank H is mapped as BG memory for the sub 2D engine, so that's where the bitmap is sourced from.

Then, on each frame, the top and bottom screens are swapped, and the process is repeated.

But... wait?

VRAM bank H is only 32K. You need at least 96K for a 256x192 bitmap.

That's where this gets fun! Every time a display capture is completed, the game transfers it to one of a set of two buffers in main RAM. Meanwhile, the other buffer is streamed to VRAM bank H, using HDMA. Actually, only a 512 byte part of bank H is used - the affine parameters for BG2 are set in such a way that the first scanline will be repeated throughout the entire screen, and that first scanline is what gets updated by the HDMA.
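The numbers are easy to check, and the scanline trick can be modelled in a few lines. The sketch below is a toy model, not melonDS code: it assumes 16-bit direct color pixels, treats the HDMA as one 512-byte copy per scanline, and reduces the affine setup to "every screen line samples bitmap row 0". All names are made up for illustration.

```python
WIDTH, HEIGHT = 256, 192
BYTES_PER_PIXEL = 2                 # 16-bit direct color
BANK_H_SIZE = 32 * 1024             # VRAM bank H

FRAME_BYTES = WIDTH * HEIGHT * BYTES_PER_PIXEL   # one full captured frame
LINE_BYTES = WIDTH * BYTES_PER_PIXEL             # one scanline

def display_streamed_frame(ram_buffer):
    """Return what the screen shows when bitmap row 0 is refreshed every scanline."""
    bank_h_row0 = [0] * WIDTH       # the only part of bank H that gets used
    screen = []
    for y in range(HEIGHT):
        # Hypothetical HDMA step: copy scanline y from main RAM into bank H.
        bank_h_row0[:] = ram_buffer[y]
        # Assumed affine setup: every screen line samples bitmap row 0.
        screen.append(list(bank_h_row0))
    return screen
```

Under these assumptions, a full frame is 96K and does not fit in bank H, while a single scanline is exactly 512 bytes, and the streamed output matches the frame held in main RAM.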

All in all, pretty smart.

The part where it sucks is that it absolutely kills my OpenGL renderer.

That's because the renderer has support for splitting rendering if certain things are changed midframe: certain settings, palette memory, VRAM, OAM. In such a situation, one screen area gets rendered with the old settings, and the new ones are applied for the next area. Much like the blargSNES hardware renderer.

The renderer also pre-renders BG layers, so that they may be directly used by the compositor shader, and static BG layers don't have to be re-decoded every time.

But in a situation like the aforementioned one, where content is streamed to VRAM per-scanline, this causes rendering to be split into 192 sections, each with their own VRAM upload, BG pre-rendering and compositing. This is disastrous for performance.
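To put a number on it, here is a rough sketch of how midframe changes turn into render sections. It assumes a section boundary is created at every scanline where tracked state (VRAM, in this case) was written. The function name and the model are illustrative only, not the actual renderer logic.

```python
def split_into_sections(dirty_lines, height=192):
    """Return (start, end) scanline ranges, end exclusive.

    A new section begins at scanline 0 and at every dirty scanline.
    """
    boundaries = sorted({0, *[y for y in dirty_lines if 0 < y < height]})
    boundaries.append(height)
    return list(zip(boundaries[:-1], boundaries[1:]))
```

With no midframe writes this gives a single section covering the whole screen. With a write on every scanline, as in Golden Sun, it gives 192 one-line sections.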

This is where something like the old renderer approach would shine, actually.

I have some ideas in mind to alleviate this, but it's not the only problem there.

The other problem is that it just won't be possible to make upscaling work in Golden Sun. Not without some sort of game-specific hack.

I could fix the flickering and bad performance, but at best, it would function like it does in DeSmuME.

The main issue here is that display captures are routed through main RAM. While I can relatively easily track which VRAM banks are used for display captures, trying to keep track of this through other memory regions would add too much complexity. Not to mention the whole HDMA bitmap streaming going on there.

At this rate it's just easier to add a specific hack, but I'm not a fan of this at all. We'll have to see what we do there.

Still, I appreciate the creativity the Golden Sun developers have shown there.

Becoming a master mosaicist -- by Arisotura

So basically, mosaic is the last "big" feature that needs to be added to the OpenGL renderer...

Ah, mosaic.

I wrote about it here, back then. But basically, while BG mosaic is mostly well-behaved, sprite mosaic will happily produce a fair amount of oddities.

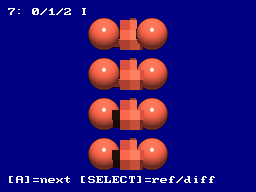

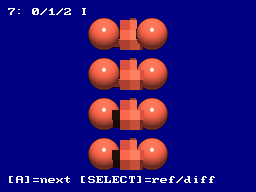

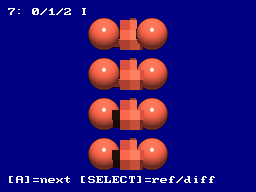

Actual example, from hardware:

I had already noticed this back then, as I was trying to perfect sprite mosaic, but didn't quite get there. I ended up putting it on the backburner.

Fast forward to, well, today. As I said, I need to add mosaic support to the OpenGL renderer, but before doing so, I want to be sure to get the implementation right in the software renderer.

So I decided to tackle this once and for all. I developed a basic framework for graphical test ROMs, which can set up a given scene, capture it to VRAM, and compare that against a reference capture acquired from hardware. While the top screen shows the scene itself, the bottom screen shows either the reference capture, or a simple black/white compare where any different pixels immediately stand out.
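The compare step could look something like the sketch below. It is not the test ROM code: it assumes both captures are flat lists of 16-bit pixel values of equal length, and it assumes mismatches are drawn in white on black. The function names are hypothetical.

```python
WHITE, BLACK = 0x7FFF, 0x0000

def compare_captures(actual, reference):
    """Build a black/white image where differing pixels are white."""
    if len(actual) != len(reference):
        raise ValueError("captures must have the same size")
    return [WHITE if a != r else BLACK for a, r in zip(actual, reference)]

def count_mismatches(actual, reference):
    """Number of pixels that differ between the two captures."""
    return sum(1 for px in compare_captures(actual, reference) if px == WHITE)
```

A test passes when `count_mismatches` returns zero. Otherwise the compare image shows where the emulator and the hardware disagree.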

I built a few test ROMs out of this. One tests various cases, ranging from one sprite to several, and even things like changing REG_MOSAIC midframe. One tests sprite priority orders specifically. One is a fuzzing test, which just places a bunch of sprites with random coordinates and priority orders.

I intend to release those test ROMs on the board, too. They've been pretty helpful in making sure I get the details of sprite mosaic right. Talking with GBA emu developers was also helpful.

Like a lot of things, the logic for sprite mosaic isn't that difficult once you figure out what is really happening.

First thing, the 2D engine renders sprites from first to last. This kind of sucks from a software perspective, because sprite 0 has the highest priority, and sprite 127 the lowest. For this reason, melonDS processed sprites backwards, so that they would naturally have the correct priority order without having to worry about what's already in the render buffer. But that isn't accurate to hardware. This part will be important whenever we add support for sprite limits.

What is worth considering is that when rendering sprites, the 2D engine also keeps track of transparent sprite pixels. If a given sprite pixel is transparent, the mosaic flag and BG-relative priority value for this pixel are still updated. However, while opaque sprite pixels don't get rendered if a higher-priority opaque pixel already exists in the buffer, transparent pixels end up modifying flags even if the buffer already contains a pixel, as long as that pixel isn't opaque. This quirk is responsible for a lot of the weirdness sprite mosaic may exhibit.

The mosaic effect itself is done in two different ways.

Vertical mosaic is done by adjusting the source Y coordinate for sprites. Instead of using the current VCount as a Y coordinate, an internal register is used, and that register gets updated every time the mosaic counter reaches the value set in REG_MOSAIC. All in all, fairly simple.
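As a rough sketch of that, the function below lists which line ends up in the internal register for each VCount. It is a simplified model under a few assumptions: the REG_MOSAIC field holds the block height minus one, the value doesn't change midframe, and the register is reloaded on the line after the counter reaches that value. The names are made up.

```python
def vertical_mosaic_lines(mosaic_v, num_lines=192):
    """Return, for each VCount, the line held in the internal Y register."""
    latched_y = 0
    counter = 0
    result = []
    for vcount in range(num_lines):
        if counter == 0:
            latched_y = vcount          # start of a new mosaic block
        result.append(latched_y)
        # Assumed counter behaviour: reset once it reaches the register value.
        counter = 0 if counter == mosaic_v else counter + 1
    return result
```

With a field value of 3, lines 0 to 3 all sample line 0, lines 4 to 7 sample line 4, and so on. A value of 0 leaves every line untouched.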

Horizontal mosaic, however, is done after all the sprites are rendered. The sprite buffer is processed from left to right. For each pixel, if it meets any of a few rules, its value is copied to a latch register, otherwise it is replaced with the last value in the latch register. The rules are: if the horizontal mosaic counter reaches the REG_MOSAIC value, or if the current pixel's mosaic flag isn't set, or if the last latched pixel's mosaic flag wasn't set, or if the current pixel has a lower BG-relative priority value than the last latched pixel.

Of course, there may be more interactions we haven't thought of, but these rules took care of all the oddities I could observe in my test cases.
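Here is how the horizontal pass could be written down, going by the rules listed above. This is a sketch and not the melonDS implementation: the `SpritePixel` type is invented for the example, the counter is assumed to start a new block every (REG_MOSAIC value + 1) pixels, and the first pixel of the line is assumed to always be latched.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SpritePixel:
    color: int
    mosaic: bool      # mosaic flag recorded for this pixel
    priority: int     # BG-relative priority, lower value = higher priority

def apply_horizontal_mosaic(line, mosaic_h):
    """Process a sprite line left to right with a single latch register."""
    out = []
    latch = None
    counter = 0
    for px in line:
        if (latch is None                     # nothing latched yet
                or counter == 0               # mosaic counter starts a new block
                or not px.mosaic              # current pixel isn't mosaic
                or not latch.mosaic           # last latched pixel wasn't mosaic
                or px.priority < latch.priority):
            latch = px                        # pixel is kept and latched
        out.append(latch)                     # otherwise replaced by the latch
        counter = 0 if counter == mosaic_h else counter + 1
    return out
```

Note how a single non-mosaic pixel breaks a block twice: once for itself, and once more for the pixel right after it, since the latched pixel's flag is then clear.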

Now, the fun part: implementing this shit into the OpenGL renderer is going to be fun. As I said before, while this algorithm makes sense if you're processing pixels from left to right, that's not how GPU shaders work. I have a few ideas in mind for this, but the code isn't going to be pretty.

But I'm not quite there yet. I still want to make test ROMs for BG mosaic, to ensure it's also working correctly, and also to fix a few remaining tidbits in the software renderer.

Regardless, it was fun doing this. It's always satisfying when you end up figuring out the logic, and all your tests come out just right.

blackmagic3: refactor finished! -- by Arisotura

The blackmagic3 branch, a perfect example of a branch that has gone way past its original scope. The original goal was to "simply" implement hi-res display capture, and here we are, refactoring the entire thing.

Speaking of refactor, it's mostly finished now. For a while, it made a mess of the codebase: everything had to be moved around, which left the codebase in a state where it wouldn't even compile. It even started to feel overwhelming. But now things are good again!

As I said in the previous post, the point of the refactor was to introduce a global renderer object that keeps the 2D and 3D renderers together. The benefits are twofold.

First, this is less prone to errors. Before, the frontend had to separately create 2D and 3D renderers for the melonDS core, with the possibility of having mismatched renderers. Having a unique renderer object avoids this entirely, and is overall easier to deal with for the frontend.

Second, this is more accurate to hardware. Namely, the code structure more closely adheres to the way the DS hardware is structured. This makes it easier to maintain and expand, and more accurate in a natural kind of way. For example, implementing the POWCNT1 enable bits is easier. The previous post explains this more in detail, so I won't go over it again.

I've also been making changes to the way 2D video registers are updated, and to the state machine that handles video timing, with the same aim of more closely reflecting the actual hardware operation. This will most likely not result in tangible improvements for the casual gamer, but if we can get more accurate with no performance penalty, that's a win. Reason is simple: in emulation, the more closely you follow the original hardware's logic and operation, the less likely you are to run into issues. But accuracy is also a tradeoff. I could write a more detailed post about this.

If there are any tangible improvements, they will be about the mosaic feature, especially sprite mosaic, which melonDS still doesn't handle correctly. Not much of a big deal, mosaic seems seldom used in DS games...

Since we're talking about accuracy, it brings me to this issue: 1-byte writes to DISPSTAT don't work.

A simple case: 8-bit writes to DISPSTAT are just not handled. I addressed it in blackmagic3, as I was reworking that part of the code. But it's making me think about better ways to handle this.

As of now, melonDS has I/O access handlers for all possible access types: 8-bit, 16-bit, 32-bit, reads and writes. It was done this way so that each case could be implemented correctly. The issue, however, is that I've only implemented the cases which I've run into during my testing, since my policy is to avoid implementing things without proper testing.

Which leads to situations like this. It's not the first time this has happened, either.

So this has me thinking.

The way memory reads work on hardware depends on the bus width. On the DS, some memory regions use a 32-bit bus, others use a 16-bit bus.

So if you do a 16-bit read from a 32-bit bus region, for example, it is internally implemented as a full 32-bit read, and the unneeded part of the result is masked out.

Memory writes work in a similar manner. What comes to my mind is the FPGA part of my WiiU gamepad project, and in particular how the SDRAM there works, but the SDRAM found in the DS works in a pretty similar way.

This type of SDRAM has a 16-bit data bus, so any access will operate on a 16-bit word. Burst modes allow accessing consecutive addresses, but it's still 16 bits at a time. However, there are also two masking inputs: those can be used to mask out the lower or upper byte of a 16-bit word. So during a read, the part of the data bus which is masked out is unaffected, and during a write, it is ignored, meaning the corresponding bits in memory are untouched. This is how 8-bit accesses are implemented.
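The write side of this can be sketched as follows. This is a toy model of a single 16-bit memory word with two byte-mask inputs, not code from melonDS or from the FPGA project, and the function names are invented.

```python
def masked_write16(stored, data, mask_low=False, mask_high=False):
    """Write a 16-bit word; masked byte lanes keep their stored contents."""
    keep = (0x00FF if mask_low else 0) | (0xFF00 if mask_high else 0)
    return (stored & keep) | (data & 0xFFFF & ~keep)

def write8(stored, address, value):
    """8-bit write expressed as a 16-bit access with one byte lane masked."""
    if address & 1:
        # Odd address: drive the upper byte, mask out the lower one.
        return masked_write16(stored, (value & 0xFF) << 8, mask_low=True)
    # Even address: drive the lower byte, mask out the upper one.
    return masked_write16(stored, value & 0xFF, mask_high=True)
```

For example, with 0x1234 in memory, an 8-bit write of 0xAB to the even address leaves 0x12AB, and the same write to the odd address leaves 0xAB34.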

On the DS, I/O regions seem to operate in a similar fashion: for example, writes smaller than a register's bit width will only update part of that register. However, I/O registers are not just memory: there are several of them which can trigger actions when read or written to. There are also registers (or individual register bits) which are read-only, or write-only. This is the part that needs extensive testing.

As a fun example, register 0x04000068: DISP_MMEM_FIFO.

This is the feature known as main memory display FIFO. The basic idea is that you have DMA feed a bitmap into the FIFO, and the video hardware renders it (or uses it as a source in display capture). Technically, the FIFO is implemented as a small circular buffer that holds 16 pixels.

So writing a 32-bit word to DISP_MMEM_FIFO stores two pixels in the FIFO, and advances the write pointer by two. All fine and dandy.

However, it's a little more complicated. Testing has shown that DISP_MMEM_FIFO is actually split into different parts, and reacts differently depending on which parts are accessed.

If you write to it in 16-bit units, for example, writing to the lower halfword stores one pixel in the FIFO, but doesn't advance the write pointer - so subsequent writes to the same address will overwrite the same pixel. Writing to the higher halfword (0x0400006A) stores one pixel at the next write position (write pointer plus one), and advances the write pointer by two.

Things get even weirder if you write to this register in 8-bit units. Writing to the first byte behaves like a 16-bit write to the lower halfword, but the input value is duplicated across the 16-bit range (so writing 0x23 will store a value of 0x2323). Writes to the second byte are ignored. Writes to the third byte behave like a 16-bit write to the upper halfword, with the same duplicating behavior, but the write pointer doesn't get incremented. Writing to the fourth byte increments the write pointer by two, but the input value is ignored.

You can see how this register was designed to be accessed in either 32-bit units, or sequential 16-bit units, but not really 8-bit units - those get weird.
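The behavior described above can be summed up in a small model. This is a sketch based only on that description, not the melonDS code: the class and method names are made up, offsets are relative to 0x04000068, and the write pointer is assumed to wrap around the 16-pixel buffer.

```python
class MainMemoryFifo:
    SIZE = 16  # pixels held by the circular buffer

    def __init__(self):
        self.pixels = [0] * self.SIZE
        self.write_pos = 0

    def _store(self, slot, value):
        # slot 0 = current write position, slot 1 = the one after it
        self.pixels[(self.write_pos + slot) % self.SIZE] = value & 0xFFFF

    def _advance(self):
        self.write_pos = (self.write_pos + 2) % self.SIZE

    def write32(self, value):
        self._store(0, value)          # lower halfword: first pixel
        self._store(1, value >> 16)    # upper halfword: second pixel
        self._advance()

    def write16(self, offset, value):
        if offset == 0:
            self._store(0, value)      # no pointer advance
        elif offset == 2:
            self._store(1, value)
            self._advance()
        else:
            raise ValueError("offset must be 0 or 2")

    def write8(self, offset, value):
        doubled = (value & 0xFF) * 0x0101   # 0x23 becomes 0x2323
        if offset == 0:
            self._store(0, doubled)
        elif offset == 1:
            pass                       # ignored
        elif offset == 2:
            self._store(1, doubled)    # stored, but no pointer advance
        elif offset == 3:
            self._advance()            # value ignored
        else:
            raise ValueError("offset must be 0 to 3")
```

In this model, two sequential 16-bit writes (offset 0, then offset 2) end up equivalent to one 32-bit write, which fits the idea that those are the intended access patterns.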

Actually, this kind of stuff looks weird from a software perspective, but if you consider the way the hardware works, it makes sense.